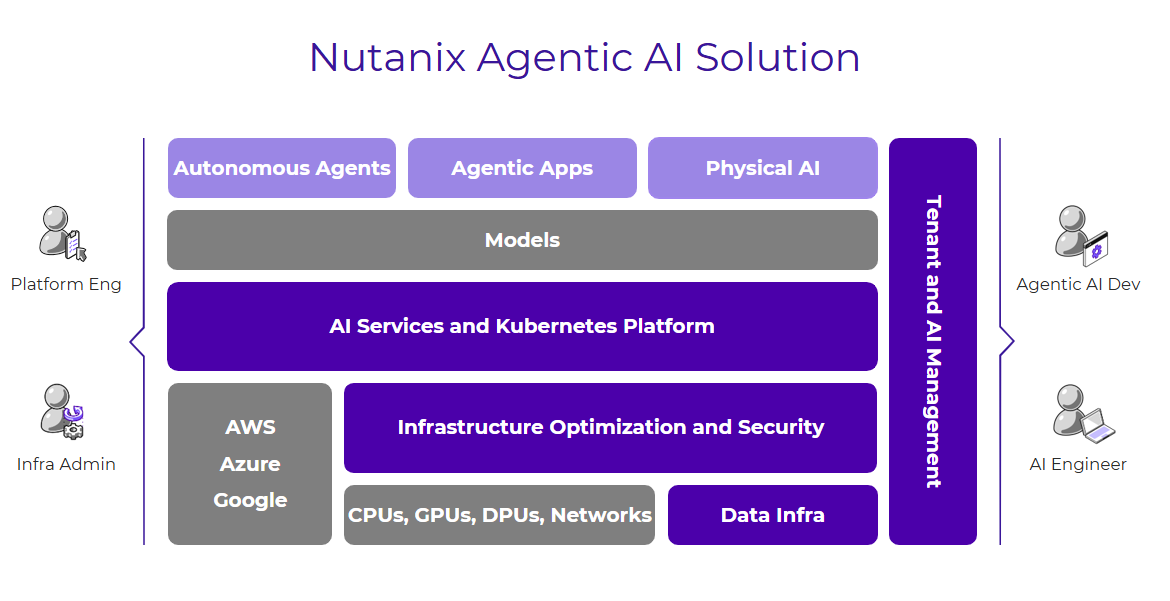

The Nutanix Agentic AI solution abstracts complexity and creates a seamless bridge from the agentic AI builders to the AI factory operators. This full-stack solution offers a cloud operating model for AI factory operators by simplifying operations, maximizing performance and security, and optimizing token costs. At the same time, it enables the agentic AI builders to focus on innovation, model management, and rapid inference scaling.

Agentic AI

Your AI Factory Needs Infrastructure That Actually Works

Most AI platforms promise AI at scale, but deliver complexity. Nutanix Agentic AI is a full-stack software solution that provides a cloud operating model to help organizations build, operate and govern AI factories. Through integrations with NVIDIA's accelerated computing ecosystem, the solution simplifies operations, maximizes performance and security, and optimizes GPU utilization and token costs.

A Cloud Operating Model for AI Factories

Nutanix delivers a cloud operating model specifically engineered for the era of AI coworkers running on AI factories. By abstracting complexity and helping IT decision-makers balance performance, security, and cost, the Nutanix Agentic AI solution does more than just simplify operations; it fundamentally optimizes the economics of AI.

AI Services and Kubernetes Platform

Advanced AI Gateway and Inference Services

A unified, secure inference endpoint lets enterprises use cloud-hosted models (and token credits) alongside private LLMs with consistent authentication, observability, and token-based rate limiting.
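To make token-based rate limiting concrete, here is a minimal sketch of a token-bucket limiter that counts LLM tokens rather than requests. It is an illustration only and does not describe the Nutanix gateway's actual implementation; the `TokenBudget` class, its fields, and the numbers used are all hypothetical.

```python
import time
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class TokenBudget:
    """Hypothetical token-bucket limiter that meters LLM tokens, not requests."""
    capacity: float        # largest burst of tokens a client may consume
    refill_per_sec: float  # sustained token allowance per second
    available: float = field(init=False)
    last_refill: float = field(init=False)

    def __post_init__(self) -> None:
        # Start with a full bucket.
        self.available = self.capacity
        self.last_refill = time.monotonic()

    def allow(self, tokens: int, now: Optional[float] = None) -> bool:
        """Debit `tokens` and return True if they fit in the budget, else False."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.last_refill)
        # Refill proportionally to elapsed time, never beyond capacity.
        self.available = min(self.capacity,
                             self.available + elapsed * self.refill_per_sec)
        self.last_refill = now
        if tokens <= self.available:
            self.available -= tokens
            return True
        return False


if __name__ == "__main__":
    budget = TokenBudget(capacity=1000, refill_per_sec=100)
    print(budget.allow(800))  # fits in the initial burst
    print(budget.allow(300))  # rejected until the bucket refills
```

Metering tokens instead of requests means a single long prompt counts for more than many short ones, which is closer to how inference cost actually accrues.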

Model Context Protocol Support and Fine-Tuning

Nutanix Enterprise AI extends its existing Model-as-a-Service (MaaS) capabilities with Model Context Protocol (MCP) support, enabling agents to securely connect to enterprise tools and data sources.

Open Kubernetes Platform with Rich AI Catalog

Move agentic applications from concept to production without infrastructure delays using a pre-validated catalog of open-source AI services, including notebooks, vector databases, and MLOps engines. The solution is natively integrated with NVIDIA AI Enterprise, allowing developers to instantly deploy NVIDIA NIMs, including Nemotron, and accelerate the development of high-performance AI applications in production.

Infrastructure Optimization and Security

Topology-Aware Optimization

The Nutanix AHV hypervisor automatically optimizes workload placement across GPU-dense servers, ensuring strict hardware alignment for maximum performance, security, and resource utilization without the complexity of manual infrastructure tuning.

DPU-Accelerated Zero Trust Networking

Nutanix Flow, with new DPU offload capabilities, delivers the raw speed of bare metal with the sophisticated isolation of a virtualized environment. The result is a high-performance, zero trust network foundation that maximizes throughput while ensuring the secure, reliable flow of data across the AI factory.

Air-Gapped Lifecycle Management

The solution supports fully disconnected installations of the entire NKP platform and the NVIDIA GPU and Network Operators, allowing highly regulated or defense-sector environments to automate driver updates and network optimization without exposing the cluster to the internet.

Foundational Data Services for AI

Linear Scalability

As an NVIDIA-Enterprise Certified AI Data Platform, Nutanix Unified Storage delivers high-speed read/write performance across thousands of GPU clients, ensuring that data availability scales as fast as your compute.

Advanced Throughput

Nutanix Unified Storage ensures that GPUs are never “starved” for data by leveraging NFS over RDMA and, soon, S3 over RDMA to provide a low-latency data path.

Cost Optimization

Nutanix Unified Storage reduces the aggregate cost per token and frees up critical GPU memory by providing a high-capacity tier for KV cache offloading, allowing you to process significantly larger context windows and serve more concurrent users without a performance penalty.
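To see why KV cache offloading matters, it helps to estimate how much memory the cache consumes. The sketch below uses the standard sizing rule for transformer attention (one key and one value vector per layer, per KV head, per token). The model dimensions are illustrative assumptions, not figures for any specific model or for Nutanix Unified Storage.

```python
def kv_cache_bytes(num_layers: int, num_kv_heads: int, head_dim: int,
                   context_tokens: int, bytes_per_element: int = 2) -> int:
    """Estimate KV cache size for one sequence.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_element=2 assumes 16-bit precision (an assumption).
    """
    per_token = 2 * num_layers * num_kv_heads * head_dim * bytes_per_element
    return per_token * context_tokens


if __name__ == "__main__":
    # Hypothetical model: 32 layers, 8 KV heads, head dimension 128, 16-bit cache.
    per_token = kv_cache_bytes(32, 8, 128, context_tokens=1)
    full_context = kv_cache_bytes(32, 8, 128, context_tokens=128 * 1024)
    print(f"{per_token / 1024:.0f} KiB per token")
    print(f"{full_context / 1024**3:.0f} GiB for a 128K-token context")
```

Under these assumptions, a single long-context sequence already occupies a large share of a GPU's memory, and the total grows linearly with the number of concurrent users, which is the pressure a high-capacity offload tier is meant to relieve.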

Frequently Asked Questions

Agentic AI builders face a high degree of "innovation friction" as they navigate a fragmented landscape of models, tools, and data silos instead of focusing on building intelligence. Developers lack a unified, secure path to leverage diverse LLMs and open-source tools so they can rapidly evolve applications from simple chat interfaces into sophisticated agentic AI capable of driving real business outcomes.

For AI factory operators, the biggest challenge is delivering business value, measured in time to tokens and cost per token, in the face of operational complexity across the AI factory, such as:

- Complexity of managing diverse and rapidly evolving AI hardware (GPUs, networking, storage).

- Complexity of providing shared access to critical AI infrastructure while ensuring secure access to models and data and complying with sovereignty requirements.

- Complexity of consistently delivering maximum performance while optimizing resource utilization across the full AI factory.

- Complexity of managing the lifecycle of fragmented, bespoke point solutions supporting AI factory operations.
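As a rough illustration of how utilization feeds into cost per token, the following sketch divides an hourly GPU cost by the tokens actually served in that hour. The function name, the simple linear model, and all figures are assumptions chosen for the example; real costs also depend on factors such as batching, power, and software licensing.

```python
def cost_per_million_tokens(gpu_cost_per_hour: float,
                            peak_tokens_per_sec: float,
                            utilization: float) -> float:
    """Estimate serving cost per one million tokens.

    utilization is the fraction (0 to 1] of peak throughput actually achieved.
    """
    if not 0.0 < utilization <= 1.0:
        raise ValueError("utilization must be in (0, 1]")
    tokens_per_hour = peak_tokens_per_sec * utilization * 3600
    return gpu_cost_per_hour / tokens_per_hour * 1_000_000


if __name__ == "__main__":
    # Hypothetical numbers: a $4.00/hour GPU with a 2,000 tokens/s peak.
    for util in (0.25, 0.5, 1.0):
        cost = cost_per_million_tokens(4.0, 2000, util)
        print(f"utilization {util:.0%}: ${cost:.2f} per million tokens")
```

In this simplified model, halving utilization doubles the cost per token, which is why idle or poorly placed GPUs show up directly in token economics.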

The Cloud Operating Model is Nutanix’s approach to bridging the gap between AI developers and infrastructure teams. Instead of managing fragmented point solutions or complex bare-metal clusters, this model provides a unified, full-stack environment. It allows operators to govern AI infrastructure (GPUs, DPUs, and storage) with the same ease as a cloud service, while giving developers instant, secure access to the tools and models they need to scale thousands of intelligent agents.

Nutanix optimizes token economics through several integrated efficiencies:

- Topology-Aware Optimization: The AHV hypervisor automatically places workloads across GPU-dense servers to maximize hardware alignment.

- Resource Offloading: Using DPUs (Data Processing Units) to handle networking and security tasks frees up GPU cycles specifically for inference.

- Smart Storage: Nutanix Unified Storage provides a high-capacity tier for KV Cache offloading, which saves expensive GPU memory and allows for larger context windows without a performance penalty.

While bare-metal was the standard for initial model training, it often lacks the security and isolation required for scaling agents in an enterprise. Nutanix uses VM-based Kubernetes infrastructure to provide:

- Superior Isolation: Stronger multi-tenancy and security boundaries between different AI workloads.

- Management at Scale: Easier lifecycle management and resource allocation.

- Bare-Metal Performance: By leveraging DPU acceleration and topology awareness, Nutanix delivers the speed of bare metal with the governance of a virtualized environment.

The NAI Gateway acts as a secure "front door" for all AI models. It provides a unified inference endpoint that allows enterprises to manage cloud-hosted models and private LLMs in one place. Key features include:

- Governance: Token-based rate limiting to prevent "bill shock."

- Observability: Full visibility into who is consuming resources and how.

- Connectivity: Support for the Model Context Protocol (MCP), which allows agents to securely connect to private enterprise data and tools.

The solution reduces "innovation friction" by providing a developer-centric environment where they can bypass infrastructure setup. Through the Nutanix Kubernetes Platform (NKP), builders gain access to a rich AI catalog including:

- Pre-built open-source tools (Notebooks, Vector Databases, MLOps engines).

- Instant deployment of NVIDIA NIMs and the NVIDIA Nemotron family of models.

- 1-click secure inference endpoints and turnkey access to fine-tuning services.

Nutanix Unified Storage provides a scalable, high-performance data platform purpose-built for modern workloads like AI and next-gen apps. Key capabilities include:

- Ultra-fast read throughput and dense all-NVMe capacity to handle massive datasets for AI pipelines, including inferencing and Retrieval-Augmented Generation (RAG).

- Integration with Nutanix Kubernetes Platform, enabling seamless deployment of containerized AI/ML pipelines and cloud-native applications.

- Multi-protocol data access, simplifying storage for diverse workloads and accelerating innovation.