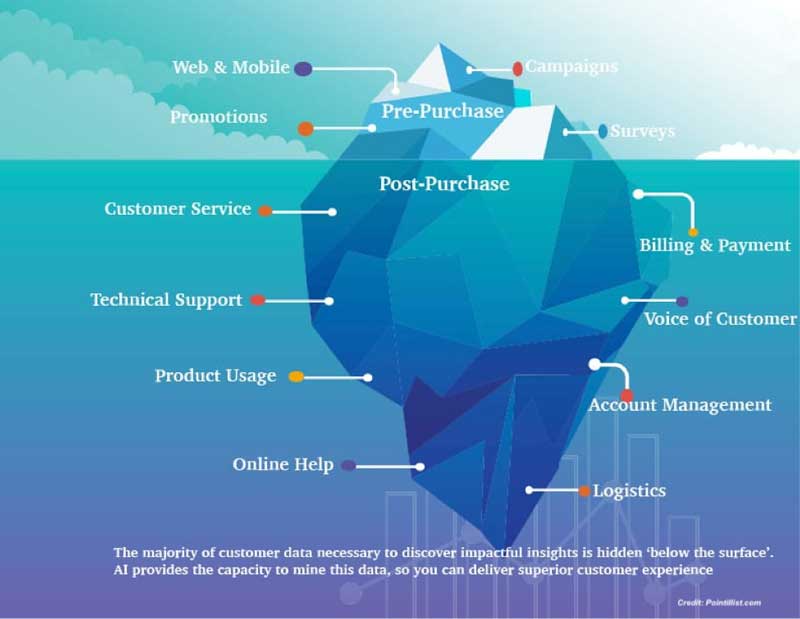

Understanding customer expectations is essential to readying any enterprise for big changes. While organizations spend much time and research on understanding the customer, adding the power of Artificial Intelligence (AI) to the corporate toolkit can produce answers to needs that organizations don’t even know their customers have.

Think of it this way: customers only tell you so much about what they really want and feel; their behavior tells you much more. AI can analyze huge amounts of data for patterns in consumer behavior. These patterns, in turn, reveal insights and opportunities the business might never have considered.

Source: Pointillist

This data resides “below the surface” of direct interactions and cannot easily be discerned by humans. Without the ability to analyze the vast amounts of data these interactions generate, such patterns remain unseen. That changes once Big Data enters the equation and predictive forecasting is applied to customer service.

Envision the Customer Experience

While AI offers many potential benefits, a clear understanding of how the business wants to serve its customers is paramount to succeeding with it. Cost-cutting measures are often the earliest uses of AI, but greater value lies in helping customers recognize needs they may not have fully considered yet, such as saving for college, planning for retirement, and estate planning. Modern tech like cost

AI can recognize experience gaps in consumer data and browsing history and suggest ways to bridge them. This is quite different from throwing the latest sales campaign at customers and accepting a 1–2% response rate as reasonable.

AI-enabled engagement is deeply personalized, which helps it earn customers’ trust and acceptance. “By understanding the customer better, we will be able to develop a new set of services that will help retain them and attract more,” said Yves Le Gelard, Chief Digital Officer at ENGIE, an energy company with 21 million customers across Europe.

While there are numerous use cases of AI adding value to the customer journey, customers also need to be ready for it. In a recent survey of 6,000 people, 28% said they would be uncomfortable with a business using AI to improve interactions. Since that leaves a clear majority who did not express discomfort, the time is ripe to leverage AI to reach the customer base with relevant, meaningful offers.

Put People before Profits

CXOs focused on driving up revenue and profits can overlook the fact that AI can also improve working conditions, cutting down on routine tasks and making others easier for staff. Process automation, chatbots, recommendation engines, support systems, and similar tools simplify life for employees, increase productivity, and free up time for decisions where human judgment matters most.

Employees are the best people to collect, mine, and analyze customer data. Organizations must use insights from customer-facing staff to identify opportunities and find the answers needed for improvement and growth. Armed with data, AI can run deep analysis, pinpoint problems, “learn” what works, and turn solutions into processes much more quickly than humans can.

Each business unit can have a small group of Data Advocates: individuals who know what data is available to the organization and understand how it is collected, how it flows, and how it can be used. These groups should share what they know with advocates from other units and with the data scientists building AI systems. A corporate Chief Data Officer (CDO) is also important for executive sponsorship of the project. CDOs alone won’t have answers to every pressing question, though; they need department-level experts to inform and guide their decisions.

Identify and Prioritize Issues to be Solved

Identifying the major issues plaguing the customer journey is the first step toward smoothing it out. The organization then has to decide which ones to tackle first and where AI will be most beneficial in addressing customers’ intent.

AI isn’t some magical technology from the future; it is here now. While many organizations see fast, concrete results, others may not. Even so, the volume of data AI can process should provide deeper understanding and make outstanding issues easier and faster to address.

Businesses must go after high-impact, low-effort, minimally process-invasive items first, working backward from business goals such as a higher customer acquisition rate, greater order value, or stronger brand loyalty and advocacy.

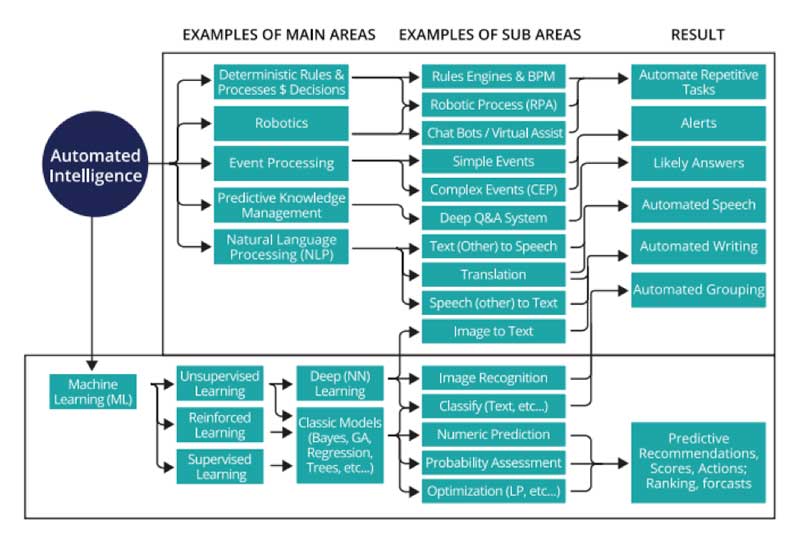

Source: Pega
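The “high-impact, low-effort, minimally process-invasive first” rule can be made concrete with a simple scoring pass over a backlog of customer-journey issues. This is an illustrative sketch only: the `Issue` fields, the 1–5 scales, and the impact / (effort + invasiveness) ratio are assumptions for this example, not a method prescribed by the article or by Pega.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Issue:
    """A hypothetical backlog item scored on assumed 1-5 scales."""
    name: str
    impact: int        # 1 (low) to 5 (high) expected effect on a business goal
    effort: int        # 1 (low) to 5 (high) estimated implementation effort
    invasiveness: int  # 1 (minimal) to 5 (major) disruption to existing processes


def priority_score(issue: Issue) -> float:
    """Higher is better: reward impact, penalize effort and process disruption."""
    return issue.impact / (issue.effort + issue.invasiveness)


def prioritize(issues: List[Issue]) -> List[Issue]:
    """Return issues ordered from most to least attractive to tackle first."""
    return sorted(issues, key=priority_score, reverse=True)


backlog = [
    Issue("Long support wait times", impact=5, effort=2, invasiveness=1),
    Issue("Rebuild order management system", impact=5, effort=5, invasiveness=5),
    Issue("Generic email offers", impact=3, effort=2, invasiveness=1),
]

for issue in prioritize(backlog):
    print(f"{issue.name}: {priority_score(issue):.2f}")
# Long support wait times: 1.67
# Generic email offers: 1.00
# Rebuild order management system: 0.50
```

A ratio keeps the ranking easy to explain to stakeholders; a weighted sum tied to a specific goal, such as order value, would work just as well.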

Start Small and Specific, Then Scale Up

Businesses looking to map their customer journey on the back of AI-driven systems and processes can start by focusing on Narrow AI – algorithms that only really provide good answers to a very specific set of questions. Starting narrow makes it possible to deliver a deeper, richer customer experience in targeted areas early on.

Think of Narrow AI as a specialist – like a neurosurgeon who is very good with brain injuries but is clueless when consulted about stomach pain. Similarly, an organization’s first AI models can be specialized. As they grow in number, complexity, application, and usage, they can be bridged and updated to know when to hand off an interaction to a different AI or to a human.
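The hand-off idea can be sketched as a small router that asks each narrow specialist in turn and falls back to a human when none answers confidently. The specialist functions, their keyword checks, and the 0.75 confidence threshold are hypothetical stand-ins; in practice each specialist would be a trained model reporting its own confidence.

```python
from typing import Callable, List, Optional, Tuple

# Each hypothetical specialist returns (answer, confidence) for questions in its
# narrow domain, or None when the question is outside that domain.
Specialist = Callable[[str], Optional[Tuple[str, float]]]

HANDOFF = "HANDOFF: connecting you with a human agent."


def billing_specialist(question: str) -> Optional[Tuple[str, float]]:
    q = question.lower()
    if "bill" in q or "invoice" in q:
        return ("Your latest invoice is under Account > Billing.", 0.9)
    return None


def shipping_specialist(question: str) -> Optional[Tuple[str, float]]:
    q = question.lower()
    if "delivery" in q or "shipping" in q:
        return ("Most orders ship within two business days.", 0.6)
    return None


def route(question: str, specialists: List[Specialist], threshold: float = 0.75) -> str:
    """Return the first sufficiently confident answer; otherwise hand off to a human."""
    for specialist in specialists:
        result = specialist(question)
        if result is None:
            continue  # outside this specialist's domain
        answer, confidence = result
        if confidence >= threshold:
            return answer
    return HANDOFF


team = [billing_specialist, shipping_specialist]
print(route("Where can I find my invoice?", team))
print(route("When will my delivery arrive?", team))  # low confidence -> human
print(route("I have a stomach ache", team))           # no specialist -> human
```

Keeping the threshold as a parameter lets a business tune how eagerly the system escalates to people as the specialists improve.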

Mobile carrier Sprint realized a 10% reduction in subscriber churn using a real-time AI system it had up and running in only 13 weeks. Agile and DevOps approaches with a focus on quick wins can bring results like these.

A common (and excellent) example is the use of chatbots to cut down on customer wait times. These can start as simple, response-coded bots that incorporate ML as data becomes available. They can then evolve into complex chatbots that reference the customer’s history of interactions, including purchases, complaints, and external touch points, to craft appropriate responses. The bots can also recommend solutions or products, at relevant times, to the accounts most likely to purchase. This frees up support and service staff to address higher-level customer issues.
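A minimal sketch of the response-coded starting point, assuming a hypothetical `CustomerHistory` record and a hand-written keyword-to-reply table (the replies, including the 30-day return window, are made-up sample content). It shows how even a rule-based bot can consult interaction history, such as an open complaint or a recent purchase; the later ML stage is not shown.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CustomerHistory:
    """Hypothetical slice of a customer's record that the bot can consult."""
    purchases: List[str] = field(default_factory=list)
    open_complaints: int = 0


# Hypothetical response-coded rules: keyword -> canned reply.
RULES = {
    "hours": "We are open 9am to 5pm, Monday to Friday.",
    "return": "Items can be returned within 30 days of delivery.",
}

FALLBACK = "I'm not sure about that yet; a support specialist will follow up."


def reply(message: str, history: CustomerHistory) -> str:
    # An unresolved complaint takes priority: route the customer to a person.
    if history.open_complaints > 0:
        return "I see an open complaint on your account; connecting you to an agent."
    text = message.lower()
    for keyword, response in RULES.items():
        if keyword in text:
            # Personalize return questions with the most recent purchase on record.
            if keyword == "return" and history.purchases:
                return f"{response} Your most recent order: {history.purchases[-1]}."
            return response
    return FALLBACK


print(reply("How do I return this?", CustomerHistory(purchases=["desk lamp"])))
```

Logging the messages that hit `FALLBACK` is one natural source of training data when the bot later moves from rules to ML.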

AI-based deep learning is compute- and storage-intensive. As more data comes in, enterprises will need multi-GPU parallel processing for training and testing AI models, along with reliable, flexible storage to access and mine large datasets. Because training is iterative, the total AI workload grows over time. This means the enterprise will need an all-encompassing AI/ML solution that:

Ensures hardware and software can be managed and upgraded as and when necessary without disrupting the model’s operation

Delivers local compute for IoT and edge devices

Doesn’t need re-architecting as algorithms or data sources scale and the model grows in complexity

Makes it simple to manage application lifecycles and push changes without manual intervention

Ensures security of sensitive data without the need for dedicated infrastructure

Evangelize and Organize the Data

As AI models within enterprises or even SMBs scale up and out, burning questions about the structure and organization of data arise and need to be answered. Where does the data live? How is it formatted, stored and accessed? What cloud infrastructure – private, public, or hybrid – is right for managing data and running the AI applications?

An article in MIT Sloan Management Review divides data used in training AI models into three categories:

The Trusted pool: Data that is current, reliable, relatively complete, and validated for use in training. Having a good taxonomy and tagging system is important here, particularly with digitized data.

The Queued pool: Data that may still need validation, or that may be incomplete or inaccurate in places. It might be useful for training after vetting. Data received through a merger or acquisition should always start here. Data stores will move up or down between pools as they are reviewed.

The Naysayer pool: Data that is known to be outdated or to contain errors and corruption, or that may contain biased data or information that cannot be used in a given location or regulatory environment (think GDPR).
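One way to operationalize the three pools is a function that assigns each data store to a pool from a handful of review flags. The flag names and decision order below are assumptions made for illustration; the MIT Sloan article defines the pools, not this logic.

```python
from dataclasses import dataclass
from enum import Enum


class Pool(Enum):
    TRUSTED = "trusted"
    QUEUED = "queued"
    NAYSAYER = "naysayer"


@dataclass
class DataStore:
    """Hypothetical review flags for one data store."""
    name: str
    validated: bool = False
    complete: bool = False
    outdated: bool = False
    known_errors: bool = False
    biased: bool = False
    restricted: bool = False        # e.g. not usable under a given regulation such as GDPR
    from_acquisition: bool = False
    reviewed: bool = False


def assign_pool(store: DataStore) -> Pool:
    # Unreviewed data from a merger or acquisition always starts in the queue.
    if store.from_acquisition and not store.reviewed:
        return Pool.QUEUED
    # Known problems rule a store out of training.
    if store.outdated or store.known_errors or store.biased or store.restricted:
        return Pool.NAYSAYER
    # Only validated, relatively complete data is trusted.
    if store.validated and store.complete:
        return Pool.TRUSTED
    return Pool.QUEUED


acquired = DataStore("acquired_crm", validated=True, complete=True, from_acquisition=True)
print(assign_pool(acquired))  # starts in the queue
acquired.reviewed = True
print(assign_pool(acquired))  # moves up once reviewed
```

Re-running `assign_pool` whenever a review updates a store's flags mirrors stores moving up or down between pools.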

AI can help an enterprise with many data silos present a single persona in customer interactions. Combining multiple cloud and on-premises data stores can be daunting, but it doesn’t have to be. Seek to simplify access and management through a “single pane of glass” wherever possible.
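As a rough illustration of collapsing silos into one customer view, the sketch below merges records from several hypothetical sources by normalized email address, letting earlier sources win on conflicting fields. Matching on email alone is a simplifying assumption; production identity resolution usually needs fuzzier matching and governance rules.

```python
from typing import Any, Dict, List

Record = Dict[str, Any]


def normalize_email(email: str) -> str:
    return email.strip().lower()


def unify(*sources: List[Record]) -> Dict[str, Record]:
    """Merge customer records from several silos into one profile per customer.

    Records are matched on normalized email. Earlier sources take precedence;
    later sources only fill in fields that are still missing.
    """
    profiles: Dict[str, Record] = {}
    for source in sources:
        for record in source:
            key = normalize_email(record["email"])
            profile = profiles.setdefault(key, {"email": key})
            for field_name, value in record.items():
                if field_name != "email" and field_name not in profile:
                    profile[field_name] = value
    return profiles


# Hypothetical silos: CRM, support desk, and web analytics.
crm = [{"email": "Ana@Example.com", "name": "Ana", "tier": "gold"}]
support = [{"email": "ana@example.com ", "open_tickets": 1}]
web = [{"email": "ana@example.com", "last_page": "/retirement-plans", "tier": "silver"}]

print(unify(crm, support, web))
```

Passing the CRM first makes it the system of record, so its `tier` value is kept over the web source's conflicting one.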

Evaluate, Update, Transform

Adding AI to the organization introduces opportunities and uncertainties that may never have been considered before. Telling a game-changing insight apart from an anomaly could propel the business forward. This effort might become a major project of its own if the business doesn’t have a digital transformation strategy in place, but it will pay long-term dividends on numerous fronts once implemented.

With great rewards come great risks. When AI is done well, however, the rewards far outweigh the risks; the greater risk lies in not adopting available technology. The secret to using AI effectively to engage and satisfy customers is to align the production of AI with its consumption at every stage and touchpoint of the customer journey.

Deciding when, where, and how to start are the first steps to moving into the AI-driven enterprise category. Considering the pace of adoption in every market, industry, and vertical, now is certainly the time.

Featured Image: Pexels

Dipti Parmar is a contributing writer. She has written for CIO.com, Entrepreneur, CMO.com and Inc. magazine. Follow her on Twitter @dipTparmar.

© 2020 Nutanix, Inc. All rights reserved.