As businesses grow, their computing needs grow with them. This means more applications and software to run and maintain, greater data storage requirements, and heavier networking demands. A popular way to handle this growth is to move to the cloud, leverage its capabilities to the fullest, and scale away. However, the cloud brings with it a host of other issues that make adopting it something of a challenge for the untrained IT professional.

On the other hand, as the size and complexity of the business grow, keeping one’s on-premises IT infrastructure operating can become an increasingly expensive task. Yet many highly regulated industries are loath to give up the security and ease of use of existing on-premises systems in exchange for the flexibility of the cloud.

Enter hyperconvergence.

What is Hyperconvergence?

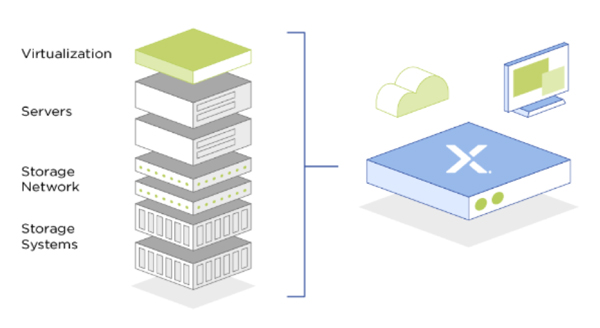

Hyperconvergence brings together all the moving parts of an organization’s IT setup and combines them under one umbrella. From computing to database storage to connecting all the networks and servers that house these applications and data, everything fits right in with a hyperconverged infrastructure setup.

Not only does it bring together all the different computing, storage, and networking resources of a company under a single roof, it also makes managing each of these critical components of the IT infrastructure easier and more efficient.

Source: ZDNet

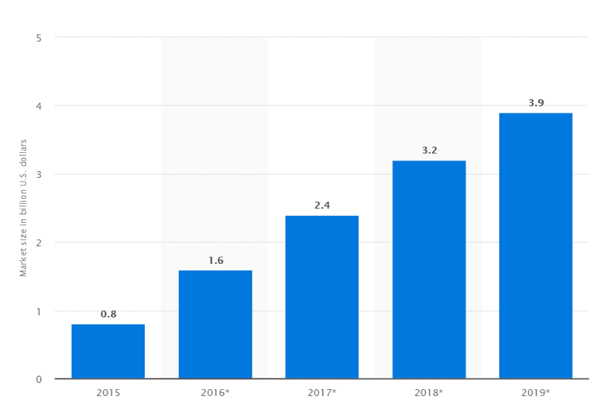

While industry analysts’ figures vary, Statista reports that the market for hyperconverged solutions has more than doubled in the last three years to nearly US$4 billion.

Source: Statista

True hyperconvergence brings some critical benefits to operations and IT processes in the enterprise.

Simplicity and Ease of Use

As organizations grow, their IT infrastructure comes to resemble a patchwork quilt of different pieces of hardware and software that have been cobbled together to suit changing needs. Since many of these systems were never meant to work together, slow, inefficient performance plagued by system failures becomes a foregone conclusion.

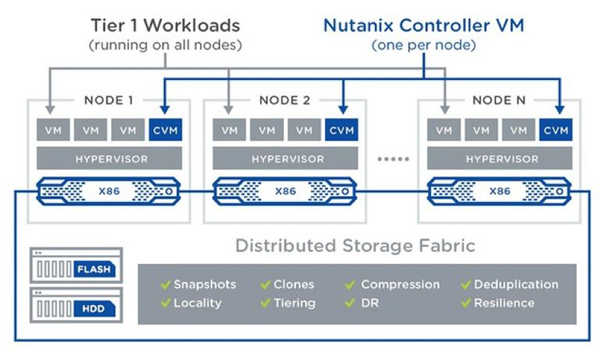

HCI works on the principle of virtualizing all aspects of the IT infrastructure. It does away with the need for dedicated, special-purpose storage and processing hardware, relying instead on virtual machines running on standard servers for operations and management of the system. HCI offers a single pane of control for IT staff to view, manage, and control all their operations across functions like computing, storage, and networking.

Again, unlike traditional systems, where the IT staff would be dealing with multiple vendors and incongruent technologies for each operation, a single point solution such as Nutanix Prism brings everything into a single control panel, including storage and compute infrastructure all the way up to virtual machines. This software is designed to streamline maintenance and upgrades, simplify workflows and consolidate visibility into cluster statistics.

Source: Nutanix

HCI uses commodity hardware instead of specialized equipment as in legacy data centers. This is also ideally supplied by the same provider and controlled by a single software management platform, making the job of maintaining and operating the network a smooth experience. From vendor management to system upgrades to procurement and payments, everything gets simplified down to the basics with an HCI implementation.

Cost Savings

HCI takes a building-block approach: start small and expand capacity in small increments, only as demand from internal customers requires. This keeps costs low and manageable. IT staff don’t have to worry about earmarking resources for future needs or buying more capacity in advance, which eliminates unnecessary upfront expenditures. This low-cost approach also reduces barriers to entry, making HCI an affordable IT management option not just for large, billion-dollar enterprises, but also for SMEs.
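To make the building-block idea concrete, here is a minimal Python sketch comparing incremental purchases with buying peak capacity on day one. The demand figures, the 20 TB node size, and the function names are all hypothetical, chosen only for illustration; they do not come from any vendor's sizing guide.

```python
import math


def nodes_needed(demand_tb, node_capacity_tb):
    """Smallest number of nodes whose combined capacity covers the demand."""
    return math.ceil(demand_tb / node_capacity_tb)


def incremental_purchases(demand_by_year, node_capacity_tb):
    """Nodes bought each year when capacity is added only as demand grows."""
    owned = 0
    purchases = []
    for demand in demand_by_year:
        required = nodes_needed(demand, node_capacity_tb)
        buy = max(0, required - owned)  # never sell nodes back if demand dips
        purchases.append(buy)
        owned += buy
    return purchases


# Hypothetical storage demand in TB for four consecutive years.
demand = [30, 55, 90, 120]
NODE_TB = 20  # assumed usable capacity per node

plan = incremental_purchases(demand, NODE_TB)
upfront = nodes_needed(max(demand), NODE_TB)

print("Nodes bought per year:", plan)
print("Nodes bought on day one if sized for peak:", upfront)
```

In this toy scenario the total number of nodes ends up the same either way; the difference is that the incremental plan spreads the spending over four years instead of committing to peak capacity at the start.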

The equipment used in HCI is not specialized or custom-designed — it’s widely available hardware that does the job without any fuss. This further lowers the cost of acquisition, implementation, and operation of the system. Another perk is the fact that much of the hardware of a legacy data center is dispensed with and replaced with software that manages the network, making it easier to upgrade and maintain without the need for expensive repairs and replacement of parts.

The cost advantage that cloud-based hyperconvergence offers is clear: less wasted capacity means that valuable time and resources can be spent on critical projects. There is an average TCO savings of 40% across industries.

Efficiency and Improved Performance

Continuing on the earlier theme of doing more with less, hyperconverged infrastructure helps organizations operate more smoothly and efficiently.

Since hyper-specialized legacy systems are replaced with basic commodity hardware, more gets done with fewer resources. The single pane of visibility to all the various tools, applications, databases, and more helps prevent waste and promotes better resource utilization.

Further, the “time to value” can be cut down drastically: you can go from unboxing to running virtualized applications in just a few hours. Planned software updates and modular scaling also require less time, while resiliency is built in.

Improved Security

One of the big downsides of the public cloud is the perceived lack of control over data and systems; second-tier cloud service providers could have erratic policies for securing data.

With hyperconverged infrastructure, companies enjoy the perks of the public cloud, such as scalability and flexibility, without the security risks. Not only is the network private and closed off to outside contact, but system administrators can also define and control data security policies and measures across the network.

HCI is designed on the assumption that hardware is unreliable and prone to failure. It therefore comes with built-in data services like snapshotting and data deduplication, along with automatic backups of data and failover support for resources.
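For readers who want a feel for how snapshotting and deduplication can fit together, the following is a simplified Python sketch. It assumes a toy content-addressed block store; the `DedupStore` class, its method names, and the 4-byte block size are hypothetical and are not how any particular HCI product implements these features.

```python
import hashlib


class DedupStore:
    """Toy block store: identical blocks are stored once, keyed by hash."""

    def __init__(self, block_size=4):
        self.block_size = block_size
        self.blocks = {}  # digest -> block bytes (each unique block once)
        self.files = {}   # name -> ordered list of block digests

    def write(self, name, data):
        digests = []
        for i in range(0, len(data), self.block_size):
            chunk = data[i:i + self.block_size]
            digest = hashlib.sha256(chunk).hexdigest()
            self.blocks.setdefault(digest, chunk)  # skip blocks already stored
            digests.append(digest)
        self.files[name] = digests

    def read(self, name):
        return b"".join(self.blocks[d] for d in self.files[name])

    def snapshot(self, name, snapshot_name):
        # A snapshot copies only the list of block references, not the data.
        self.files[snapshot_name] = list(self.files[name])


store = DedupStore()
store.write("vm1", b"AAAABBBBAAAA")   # three blocks, two of them identical
unique_after_write = len(store.blocks)

store.snapshot("vm1", "vm1@snap")
store.write("vm1", b"AAAACCCCAAAA")   # later change to the live data

print("Unique blocks after first write:", unique_after_write)
print("Snapshot still reads:", store.read("vm1@snap"))
print("Live data reads:", store.read("vm1"))
```

The snapshot keeps pointing at the original blocks, so the earlier state stays readable after the live copy changes, while the repeated block is held only once.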

Data is typically spread out across nodes throughout a hyperconverged data center. This distributed model means a failure in one part of the network does not bring the entire system to a halt. Resources from other nodes can be used to reliably continue services and maintain uptime without affecting system performance or availability.
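The distributed model described above can be sketched in a few lines of Python. This assumes a simple round-robin placement with two copies of every block; the node names, the `place_replicas` and `readable_blocks` helpers, and the replication factor are illustrative assumptions, and real platforms use their own placement and rebuild logic.

```python
def place_replicas(block_ids, nodes, replication_factor=2):
    """Assign each block to `replication_factor` distinct nodes, round-robin."""
    if replication_factor > len(nodes):
        raise ValueError("replication factor cannot exceed the node count")
    placement = {}
    for i, block in enumerate(block_ids):
        placement[block] = [
            nodes[(i + r) % len(nodes)] for r in range(replication_factor)
        ]
    return placement


def readable_blocks(placement, failed_nodes):
    """Blocks that still have at least one copy on a healthy node."""
    return {
        block
        for block, replicas in placement.items()
        if any(node not in failed_nodes for node in replicas)
    }


nodes = ["node-a", "node-b", "node-c"]          # hypothetical cluster
blocks = [f"block-{i}" for i in range(6)]
placement = place_replicas(blocks, nodes)

after_one_failure = readable_blocks(placement, {"node-b"})
after_two_failures = readable_blocks(placement, {"node-a", "node-b"})

print("Readable after losing node-b:", len(after_one_failure), "of", len(blocks))
print("Readable after losing node-a and node-b:", sorted(after_two_failures))
```

With two copies per block, losing any single node leaves every block readable. Losing two nodes at once can make some blocks unavailable, which is why the number of copies is a trade-off between capacity and resilience.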

HCI is the New Cloud

Businesses of all sizes are catching on to the benefits that HCI offers and getting on board by the dozen. Security-oriented industries like healthcare and government agencies are testing the HCI waters for the twin benefits of reliably high performance with strong data protection features. It won’t be long before HCI competes with the cloud in this battle (and wins) for enterprise data management and IT supremacy.

Dipti Parmar is a contributing writer. She has written for CIO.com, Entrepreneur, CMO.com and Inc. magazine. Follow her on Twitter @dipTparmar.

© 2019 Nutanix, Inc. All rights reserved.