Maintaining a grip on the complex world of enterprise hardware and software is not for the faint of heart. Relentless tidal waves of technology innovation mean businesses are in a constant state of digital flux. Keeping up requires a steadfast focus on change and an ability to connect the dots quickly and anticipate trends as they develop.

That’s what it has taken to succeed for decades as a technology analyst in the digital age.

"Every generation or half generation, something comes along and upends the apple cart," said Jean S. Bozman, vice president and principal analyst at Hurwitz & Associates in Palo Alto, Calif.

She’s seen the apple cart upended time and again over the past three decades, often from a front-row seat at the biggest technology events through the years, including those of Apple, Amazon Web Services (AWS), Google, HP and HP Enterprise, IBM, Intel, Microsoft, Oracle and Red Hat.

Powering companies into the digital future is a new wave of technologies from suppliers large and small. Bozman writes about hardware, software and software-defined infrastructure technologies. She dives into virtualization, cloud computing and hyperconverged infrastructure to report on their purpose and potential impact. She crystallizes trends and informs CIOs and IT professionals about new products and services that could become critical to their business.

Cloud Disruption

"We're experiencing a collision of silos, where things that were once built separately are coming together," she said, which is leading to the need for IT simplification and cloud migration. Continual waves of change are hallmarks of the technology industry. But Bozman posits that the rate of change has intensified as more companies rely on the Internet and cloud infrastructure.

Some reports project that the global information technology industry will reach as much as $5 trillion in revenue in 2019. Cloud computing is an increasingly significant part of that total, and it’s expected to grow from $36.7 billion in 2019 to more than $180 billion by 2024.
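To put that projection in perspective, the two endpoints can be turned into an implied yearly growth rate. The short sketch below is only illustrative: it assumes steady compound growth between 2019 and 2024, which the reports cited here do not claim, and the function name `implied_cagr` is a hypothetical helper, not something from those reports.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Return the compound annual growth rate implied by two endpoints.

    Assumes smooth compounding over the period, which is a simplification.
    """
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Endpoints taken from the projection in the text, in billions of US dollars.
rate = implied_cagr(36.7, 180.0, 2024 - 2019)
print(f"Implied annual growth: {rate:.1%}")
```

Under that assumption, the market would need to grow by roughly 37 percent a year. Because the projection says "more than $180 billion," this is best read as a lower bound.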

Bozman said that a confluence of many IT technologies has made it possible for cloud to become so pervasive in a short time. It’s been 10 years since the worldwide financial downturn accelerated the move to cloud, converting data center costs into operational expenses (OpEx), which could be more easily contained than capital expenses (CapEx).

Cloud computing is bought and sold in a utility model, like water or electricity. Companies use it to develop and run applications, store and retrieve data and scale computing resources up or down, as needed. Infrastructure as a service (IaaS) kicked into gear around 2008, shortly after Amazon Web Services (AWS) launched its Elastic Compute Cloud (EC2). Since then, IaaS and its cousins, software as a service (SaaS) and platform as a service (PaaS), have become some of the fastest-growing enterprise tech innovations since the rise of the commercial Internet in the 1990s.

"There are many stepping stones that take you to the cloud reality that we have right now," Bozman said. "Over the years, it took multiple innovations in hardware, networking, software virtualization and the Internet to get to what we have today. Now you can put these things together and have not one cloud, but many clouds, supporting your business."

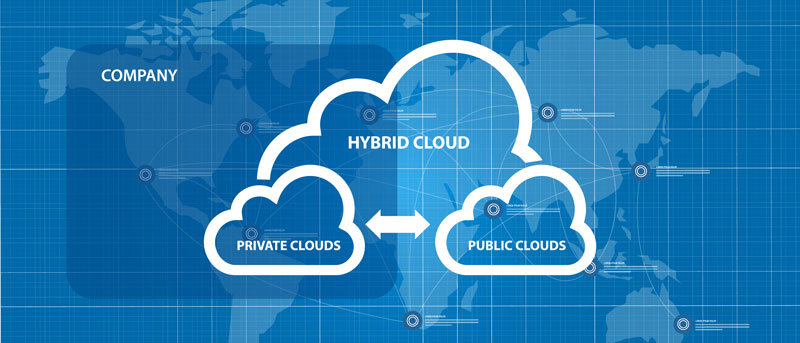

Bozman helps make sense of private cloud, public cloud, hybrid cloud and multicloud technologies so companies can create the right cloud strategy. She said cloud is forcing companies to rethink how they manage their data systems, which increasingly rely on a mix of on- and off-premises infrastructure.

This disruption is palpable but not surprising. Disruption has become synonymous with technology innovation. Bozman pointed to Microsoft co-founder Bill Gates and the late Andy Grove, former CEO of Intel, as two industry leaders who articulated the technology disruption phenomenon, including Grove’s motto, "only the paranoid survive."

Gates gave a seminal speech at the 1993 Comdex event called “Information At Your Fingertips,” in which he shared a vision of Internet browsing for the next 10 years. Bozman said that speech and Gates’s 1995 book, The Road Ahead, painted a picture of the future, and much of it came true.

"Gates knew that modern technology would require fundamental change every five to 10 years," she said. "He explained how the Internet would be really disruptive, perhaps in a creative way. It would affect the entire value proposition of what was being offered not only by his company, but by others."

But a lot of the heavy lifting came from a wide variety of companies, according to Bozman. She listed Netscape (GUI-based browser); Sun Microsystems (Java); SGI (visualization graphics); Red Hat and SUSE with enterprise Linux; Google, Docker and Sun (containers); VMware (virtualization); and Cisco, Broadcom, Brocade and Arista (routers and switches), among others. Importantly, Microsoft and Sun eventually forged more platform interoperability for Internet developers, who were on their way to widely adopting Linux-based technologies. One sign of this technology sea change is that today, Microsoft owns GitHub, the code repository service for app developers, and Microsoft Azure runs both Linux and Windows.

Bozman pointed out that despite being criticized as a latecomer to cloud, Microsoft hustled to make Azure the second-largest cloud service provider.

She remembers seeing technology demos in the 1990s led by Grove that showed early capabilities of the Internet, including video chat. She said Grove’s decision to get Intel out of the DRAM business — memory chips that drove the bulk of Intel’s business — and focus on microprocessors changed the course of computing for the masses.

Intel’s bet on processors not only fed the PC and server revolution of the 1990s and 2000s, and later the cloud computing revolution, but was also emblematic of the kind of radical risk-taking required at times to keep a technology company from being surpassed by more innovative competitors. Bozman said the majority of servers and storage devices used in enterprises and cloud service providers today are based on Intel x86 microprocessors, though she noted that IBM Power, AMD processors and Nvidia GPUs play strong roles, too.

"These two visionaries – Gates and Grove – don't take us to cloud, but they saw big change coming ahead of many others," Bozman said.

The Internet and Quest for Interoperability

Data centers in the ’80s and early ’90s typically had large mainframe computer systems. Many of them, Bozman noted, remain vital mainstays of transactional computing at the heart of Fortune 500 enterprise data centers. Back then, however, these systems were often monolithic and not connected to one another, the way they are today. In the 1990s, scale-out and clustered systems based on Unix and Windows began to surround mainframes in many enterprise data centers, she explained.

"They were all good on their own, but clearly there were some disconnects," Bozman recalled. "Those systems did not easily speak with each other. There were ways you could force it, but it wasn't easy."

But change was inevitable, as Microsoft embraced interoperability with Linux and Unix, and IBM made multibillion-dollar investments in Linux, which today extends across its product lines. Bozman credits CEOs like Microsoft’s Bill Gates, IBM’s Lou Gerstner, Intel’s Andy Grove and Salesforce’s Marc Benioff, among others, with charting new paths after seeing where the computing world was headed via the Internet. Eric Schmidt, later executive chairman of Alphabet, helped further system interoperability by pursuing Java ubiquity, on all types of systems, as head of Sun’s Technology Group (STG) in the 1990s.

Internet Standards Help Build Cloud On-Ramp

When the Internet came along, it provided a layer that eased interoperability between most systems, Bozman said. The Internet, virtualized data centers in the 2000s and the commercialization of cloud computing around 2008 were all giant stepping stones that drove companies to modernize their IT.

For the past decade, Bozman has seen these factors fuel a growing desire for hybrid cloud and, now, multicloud services. Businesses are using a combination of private clouds and public cloud services from a variety of providers. One reason they’re tapping different clouds is to avoid becoming overly dependent on any one cloud. That’s a move that wouldn’t have been possible without Internet standards such as HTTP and TCP/IP, along with World Wide Web Consortium (W3C) specifications.

Still, it’s not so easy for companies to work across a mix of different cloud services. The many technology silos inside traditional enterprise data centers have important information and applications that need to connect with public cloud, said Bozman. "We're in that era when we're tying together traditional enterprise data center workloads, applications and databases with the new stuff like IoT and cloud." Integrating enterprise data centers with multiple cloud services is becoming easier as cloud-native development and DevOps best practices become widespread.

Paying for "X as a Service"

When cloud services came along around 2008, companies could use their operational budget (OpEx) to pay for cloud services rather than making large upfront capital investments (CapEx) in hardware and software for their on-premises data centers. Bozman said most of the cloud migration was sparked by application developers and business units with immediate needs to test new code and manage their costs.

"They were saying, ‘I just want to get this problem solved. I don't care where this workload runs. I want to pay for it with my money, and I'll pay that service provider to do it,’" said Bozman.

Back in the 2000s, she said, businesses realized that cloud was great for testing and development, but for security reasons, many were reluctant to run mission-critical workloads in public cloud. As others made cloud more of a factor in everyday operations, they learned that it wasn't as inexpensive as they had initially believed. Ongoing payments to cloud service providers added up when enterprise applications and enterprise data were involved. This realization drove many companies to "cloud-ify" their own data centers; in effect, turning them into private clouds, Bozman said.

"People started building private clouds inside companies because they didn't trust outside cloud providers,” she said. “But data security and enterprise-level applications have made all of this better. Now, using cloud as a compute, storage and networking resource is very widespread, whether it's private, public, or hybrid cloud." Most businesses find a strategy for selecting and leveraging a combination of cloud services, she said.

"The next step is to have a multicloud strategy, because having just one cloud provider is not enough for many organizations. Getting information smoothly from different cloud services will be an important challenge to solve. Fortunately, standardization of connections is making that easier."

When Netflix Flicked the Switch

Bozman said the moment when everyone knew public cloud had what it takes to power a global company was when movie and video streaming service Netflix went big on AWS. Netflix turned to AWS in 2008 to build out a more distributed data system that could better handle downtime threats. That resilience matters for a service that must support peak demand in each regional time zone (Europe, Asia/Pacific and North America) around the clock, as nightfall arrives in one region after another. By 2016, the company’s IT system was fully reliant on AWS, allowing it to launch Netflix in 130 countries and attract millions of new customers.

"That was a tremendous workload for Amazon’s AWS to gain, and it continues to handle it well," said Bozman. Today, AWS is the leading cloud provider worldwide by revenue and audience, followed by Microsoft Azure and Google Cloud Platform (GCP).

Just as Amazon invented the business of leasing its own infrastructure, Netflix showed the world another kind of invention: big data-dependent businesses can run on cloud services. If every company is a technology company, as Andy Grove said decades ago, then that means every business can run on cloud services. Meanwhile, Bozman is watching how it all plays out…and writing about it.

Ken Kaplan is Editor in Chief for The Forecast by Nutanix. Find him on Twitter @kenekaplan.

Editor’s note: Find Jean Bozman’s work on the Hurwitz & Associates site and read her white paper Nutanix's Approach to Deployment -- Driving Infrastructure Change to Support Business Culture.

© 2019 Nutanix, Inc. All rights reserved.