Disclaimer

This blog is intended for informational purposes and is aimed at technical audiences. The concepts and opinions expressed are those of the author and do not necessarily represent the views of Nutanix. Readers should always validate information against their own specific environments and requirements.

Adopting Containers: What Infrastructure Admins Need to Know

The infrastructure landscape is undergoing a fundamental transformation. Organizations are adopting containerized applications at a massive scale.

For infrastructure administrators who have spent years mastering virtual machines, storage arrays, and traditional data center operations, this shift raises critical questions: What exactly are containers? How do they differ from the VMs we know so well? And most importantly, how does this change our role as infrastructure professionals?

This guide is written specifically for infrastructure administrators adopting container platforms. We will look at the architectural differences between VMs and containers, focusing on what matters most to an admin: isolation and the new operational model.

Your Changing Role as an Infrastructure Administrator

The transition to containers changes what "infrastructure administration" means. You're moving from managing individual compute instances to operating the platform that runs all applications. This is a higher level of abstraction, but it also provides greater leverage: your work enables hundreds of developers and thousands of applications.

While your foundational skills in compute, networking, and storage are still essential, you'll need to expand your toolkit to include Kubernetes® administration, declarative configuration with YAML, and GitOps workflows.

Deep Dive: Architectural Isolation

To understand containers, you must look at the specific layer where virtualization occurs.

- Virtual Machines create complete isolation by virtualizing the entire hardware stack. Each VM runs its own operating system, complete with its own kernel, system libraries, and binaries. A hypervisor sits between the physical hardware and the VMs, allocating resources and maintaining strict isolation. This architecture mirrors the physical server model we've used for decades.

- Containers, in contrast, virtualize at the operating system level. Instead of running multiple OS instances, containers share the host operating system's kernel while maintaining isolated user spaces (on Linux, through kernel namespaces and cgroups). Think of containers as lightweight process isolation mechanisms that package an application with just its dependencies, not an entire operating system.

Deciding When to Run Your Applications on Containers

The choice between a virtual machine and a container essentially boils down to one question: do you want your workload to have its own dedicated kernel and virtual hardware, or do you want every workload on the host to share one kernel? While Type 1 hypervisors, such as Nutanix AHV, remain essential for many cases, the push toward containers isn't just about cost; it is about achieving consistent builds and a seamless way to deploy new application versions.

Think of a container as a packaging solution for an application, one that can run anywhere, whether on bare metal or within a VM.

The Operational Impact: What Changes for You?

Operating the entire platform, rather than individual compute instances, has three major impacts on your daily operations:

Managing Resource Contention

In the VM world, you are used to allocating a fixed amount of vCPU and RAM, which the hypervisor strictly enforces. Containers on a node share the host kernel, so without limits a runaway process in one container can starve the others on the same node. Your role shifts from managing static sizes to managing resource requests and limits. It becomes your responsibility to ensure these are defined; otherwise, a single poorly coded app could destabilize an entire production node.
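As a rough illustration of the kind of guardrail this implies, the sketch below walks a pod definition and reports containers that do not declare CPU or memory requests and limits. The pod itself, its image names, and the `missing_resources` helper are hypothetical; only the field layout (`spec.containers[].resources.requests` and `limits`) follows the Kubernetes Pod API.

```python
# Resources we expect every container to declare (an assumption for this sketch).
REQUIRED = ("cpu", "memory")

# Hypothetical pod definition expressed as a Python dict instead of YAML.
pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {
        "containers": [
            {
                "name": "app",
                "image": "example/app:1.0",
                "resources": {
                    "requests": {"cpu": "250m", "memory": "256Mi"},
                    "limits": {"cpu": "500m", "memory": "512Mi"},
                },
            },
            # This container declares nothing, so it should be flagged.
            {"name": "sidecar", "image": "example/sidecar:1.0"},
        ]
    },
}


def missing_resources(pod_spec):
    """Return (container, section, resource) tuples for every unset value."""
    problems = []
    for container in pod_spec["spec"]["containers"]:
        resources = container.get("resources", {})
        for section in ("requests", "limits"):
            declared = resources.get(section, {})
            for resource in REQUIRED:
                if resource not in declared:
                    problems.append((container["name"], section, resource))
    return problems


if __name__ == "__main__":
    for name, section, resource in missing_resources(pod):
        print(f"{name}: missing {section}.{resource}")
```

In practice, a check like this would more likely live in an admission policy or a CI step than in an ad hoc script; the point is only that missing values are easy to detect mechanically.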

Addressing the Failure Domain

The "failure domain" changes significantly. If a VM experiences a kernel panic or a Blue Screen of Death, only that individual VM goes down. In a container environment, all containers on a node share the host kernel; therefore, a kernel panic takes down every container on that node. This makes patching and maintaining the host OS far more critical than before, as a reboot now affects a large part of your production platform rather than a single workload. Container live migration is not yet enterprise-ready, so workloads cannot simply be moved off a node while they keep running; they have to be restarted on other nodes.
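To make the shared failure domain concrete, here is a minimal sketch that computes which containers are lost when one node's kernel fails. The placement map and the node and container names are invented for illustration.

```python
# Hypothetical placement: container name -> node it is scheduled on.
placement = {
    "web-1": "node-a",
    "web-2": "node-b",
    "web-3": "node-c",
    "api-1": "node-a",
    "api-2": "node-a",
    "db-1": "node-b",
}


def blast_radius(placement, failed_node):
    """Containers that go down together if the kernel on failed_node panics."""
    return sorted(name for name, node in placement.items() if node == failed_node)


def share_lost(placement, failed_node):
    """Fraction of all containers affected by losing failed_node."""
    return len(blast_radius(placement, failed_node)) / len(placement)


if __name__ == "__main__":
    for node in sorted(set(placement.values())):
        lost = blast_radius(placement, node)
        print(f"{node}: loses {lost} ({share_lost(placement, node):.0%} of containers)")
```

In this made-up layout, both `api` replicas sit on `node-a`, so a single kernel panic removes the whole service. Spreading replicas across nodes is one way to shrink that blast radius.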

Troubleshooting the Ephemeral

We are used to the persistence of VMs, where you can SSH into a box after a crash to check /var/log files. Containers, however, are ephemeral. When they crash, the process and its local logs often vanish entirely. You can no longer rely on manual SSH fixes; instead, you must implement centralized logging and external storage to ensure that critical data survives the death of a process.
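One common pattern, sketched here under assumptions rather than prescribed, is for the application to write structured log lines to standard output instead of to files inside the container, so that a node-level collector can forward them to a central store. The `JsonFormatter` class, the `build_logger` helper, and the field names are illustrative choices, not part of any particular logging stack.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Render each log record as a single JSON line."""

    def format(self, record):
        payload = {
            "ts": record.created,  # seconds since the epoch
            "level": record.levelname,
            "logger": record.name,
            "message": record.getMessage(),
        }
        return json.dumps(payload)


def build_logger(name, stream=sys.stdout):
    """Return a logger that writes JSON lines to the given stream."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.handlers.clear()  # avoid duplicate handlers on repeated calls
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter())
    logger.addHandler(handler)
    logger.propagate = False
    return logger


if __name__ == "__main__":
    log = build_logger("orders")
    log.info("order %s accepted", "A-1001")
    log.error("payment backend unreachable")
```

Because nothing is written to the container's own filesystem, the log lines can outlive the process as long as something outside the container collects them.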

Efficiency and Speed: The Infrastructure Advantage

The resource consumption differences between virtual machines and containers are striking. A typical VM requires gigabytes of memory for its guest OS before the application even starts, whereas a container’s base overhead is measured in megabytes. This efficiency can allow you to run 2-6x more applications on the same hardware, translating directly to CAPEX savings and better utilization.
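The density claim can be sanity-checked with simple arithmetic. Every number below is an assumption chosen for illustration (host size, application footprint, and per-instance overhead), and the model ignores hypervisor, host OS, and orchestration overhead entirely, so treat the result as a rough estimate rather than a sizing guide.

```python
# Illustrative assumptions, all in MiB.
HOST_RAM_MIB = 256 * 1024        # 256 GiB host
APP_RAM_MIB = 1024               # 1 GiB per application instance
VM_GUEST_OS_MIB = 2 * 1024       # guest OS overhead per VM
CONTAINER_OVERHEAD_MIB = 50      # base overhead per container


def instances_per_host(host_mib, app_mib, overhead_mib):
    """How many instances fit in RAM when each needs app + overhead memory."""
    return host_mib // (app_mib + overhead_mib)


vms = instances_per_host(HOST_RAM_MIB, APP_RAM_MIB, VM_GUEST_OS_MIB)
containers = instances_per_host(HOST_RAM_MIB, APP_RAM_MIB, CONTAINER_OVERHEAD_MIB)
ratio = containers / vms

if __name__ == "__main__":
    print(f"VMs per host:        {vms}")
    print(f"Containers per host: {containers}")
    print(f"Density ratio:       {ratio:.2f}x")
```

With these inputs, the ratio falls inside the 2-6x range. The smaller the application relative to the guest OS, the larger the gain; for large, memory-heavy applications the difference narrows.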

Furthermore, because there is no OS to boot, containers start in seconds rather than minutes. This speed enables infrastructure to be far more responsive, allowing for rapid scaling to meet load spikes and faster CI/CD pipelines.

While containers and Kubernetes may seem massive and complex at first, at its core Kubernetes is simply a set of APIs and an orchestrator. The real challenge (or the "magic") lies in its distributed, declarative nature and in the variety of external components, such as Container Network Interface (CNI) and Container Storage Interface (CSI) plugins, that you must integrate to create a working cluster. For infrastructure administrators, this requires new skills, tools, and ways of working while still leveraging existing knowledge.

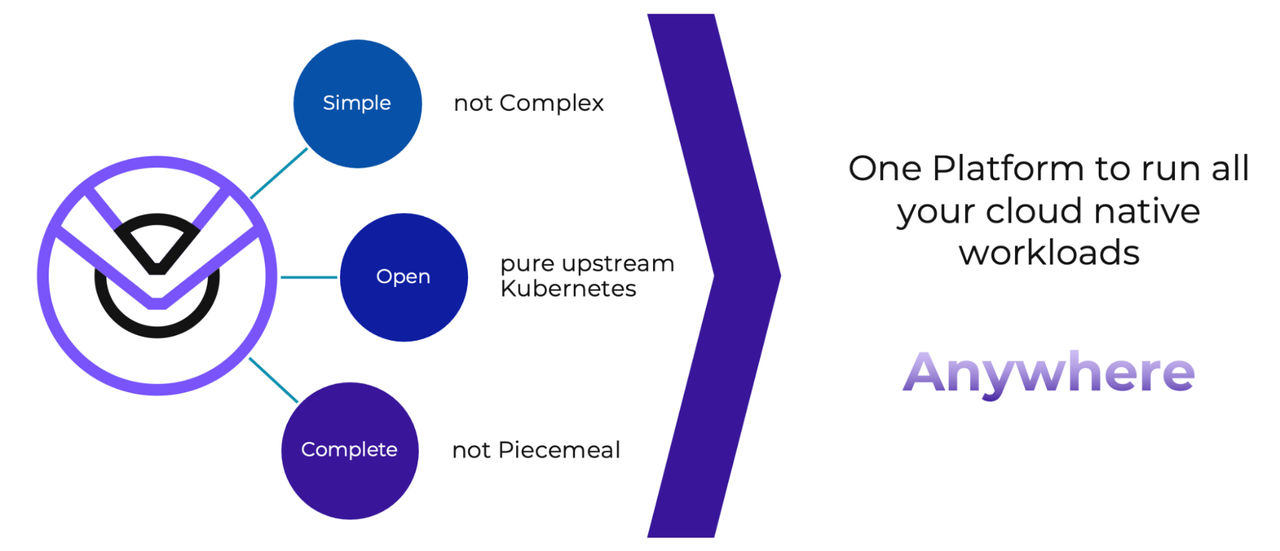
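The declarative model mentioned above can be pictured as a reconciliation loop: you declare the desired state, and a controller repeatedly compares it with what is actually running and acts on the difference. The sketch below is a deliberately simplified, single-process illustration of that idea; the `reconcile` and `apply` functions and the application names are hypothetical and do not correspond to Kubernetes APIs.

```python
def reconcile(desired, observed):
    """Return the actions needed to move observed replica counts to desired."""
    actions = []
    for app in sorted(set(desired) | set(observed)):
        want = desired.get(app, 0)
        have = observed.get(app, 0)
        if have < want:
            actions.append(("start", app, want - have))
        elif have > want:
            actions.append(("stop", app, have - want))
    return actions


def apply(observed, actions):
    """Simulate carrying out the actions and return the new observed state."""
    state = dict(observed)
    for verb, app, count in actions:
        delta = count if verb == "start" else -count
        state[app] = state.get(app, 0) + delta
        if state[app] == 0:
            del state[app]
    return state


if __name__ == "__main__":
    desired = {"web": 3, "api": 2}                  # what the admin declared
    observed = {"web": 1, "api": 2, "old-job": 1}   # what is actually running

    actions = reconcile(desired, observed)
    print("actions:", actions)

    observed = apply(observed, actions)
    print("remaining drift:", reconcile(desired, observed))
```

The admin never issues "start two more web replicas" directly. The declared state stays fixed, and the loop keeps working out the steps, which is why a crashed container is replaced without manual intervention.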

Platforms like the Nutanix Kubernetes Platform (NKP) solution are designed specifically to ease this transition, providing enterprise-grade capabilities on a foundation of pure upstream Kubernetes that preserves your freedom of choice.

Ready to see how NKP can simplify your transition to containers? Request a demo or explore more resources on the Nutanix Kubernetes Platform page.

Stay tuned for our next blog post, where we'll dive deep into container networking and storage, the infrastructure layers you'll be responsible for managing in a Kubernetes environment.

©2025 Nutanix, Inc. All rights reserved. Nutanix, the Nutanix logo and all Nutanix product and service names mentioned are registered trademarks or trademarks of Nutanix, Inc. in the United States and other countries. Kubernetes is a registered trademark of The Linux Foundation in the United States and other countries. All other brand names mentioned are for identification purposes only and may be the trademarks of their respective holder(s). Code samples and snippets that appear in this content are unofficial, are unsupported, and may require extensive modification before use in a production environment. As such, the code samples, snippets, and/or methods are provided AS IS and are not guaranteed to be complete, accurate, or up-to-date. Nutanix makes no representations or warranties of any kind, express or implied, as to the operation or content of the code samples, snippets and/or methods. Nutanix expressly disclaims all other guarantees, warranties, conditions and representations of any kind, either express or implied, and whether arising under any statute, law, commercial use or otherwise, including implied warranties of merchantability, fitness for a particular purpose, title and non-infringement therein.